insider threat

What is an insider threat?

An insider threat is a category of risk posed by those who have access to an organization's physical or digital assets. These insiders can be current employees, former employees, contractors, vendors or business partners who all have -- or had -- authorized access to an organization's network and computer systems.

The consequences of a successful insider attack can take a variety of forms, including a data breach, fraud, theft of trade secrets or intellectual property, and sabotage of security measures.

What are the different types of insider threats?

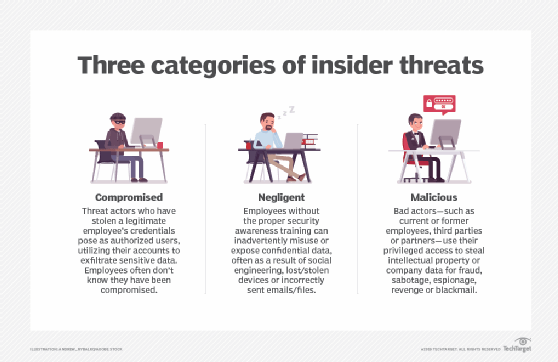

Insider threats are defined by the role of the person who introduces the threat. The following are examples of potential insider threats:

- Current employees could use privileged access to steal sensitive or valuable data for personal financial gain.

- Former employees working as malicious insiders could intentionally retain access to an organization's systems or pose a security threat by sabotaging cybersecurity measures or stealing sensitive data as a means of payback or personal gain.

- Moles are external threat actors who gain the confidence of a current employee to get insider access to systems and data. Often, they're from an outside organization hoping to steal trade secrets. Social engineering is a commonly used tactic to gain unauthorized access.

- Unintentional insider threats aren't caused by malicious employees but rather by insiders who inadvertently pose a significant risk because they don't comply with corporate security policies or because they use company systems or data negligently. While their actions are unintentional, negligent insiders can open the door to external threats, like phishing attacks, ransomware, malware or other cyber attacks.

Why are insider threats dangerous?

Insider threats can be hard to detect, even with advanced threat detection tools, largely because an insider threat typically doesn't reveal itself until the moment of attack.

Also, because the malicious actor looks like a legitimate user, it can be difficult to distinguish between normal behavior and suspicious activity in the days, weeks and months leading up to an attack. With authenticated access to sensitive information, the insider exploit might not be apparent until the data is gone.

With few safeguards preventing someone with legitimate access from absconding with valuable information, this type of data breach can be one of the costliest to endure.

The "2022 Cost of Insider Threats Global Report," a study produced by Ponemon Institute with Proofpoint sponsorship, noted that insider threat incidents have risen 44% over the past two years, with the average annual cost to an organization up more than a third to $15.4 million. The report also noted that the time to contain an insider threat incident increased from 77 days to 85 days, leading organizations to spend the most on containment.

Who are the insiders?

The following are a few real-world examples of insider threats:

- In 2020, Twitter was compromised when accounts belonging to Apple, Joe Biden, Elon Musk and several others began tweeting a bitcoin scam. After a thorough investigation, it turned out that a Twitter employee had succumbed to a social engineering scheme that gave bad actors access to Twitter's platform.

- A former employee of a personal protective equipment manufacturing company was charged with illegally accessing and deleting shipping information in 2020, during the height of the COVID-19 pandemic. U.S. Department of Justice documents from the Northern District of Georgia state that the former employee used one or more fake accounts, created prior to termination, to gain access to the network and shipping systems.

- In 2018, a former Cisco employee accessed company servers and deployed malicious code that deleted hundreds of virtual machines, affecting thousands of customers for weeks. Cisco claims it lost $2.4 million due to the cost to restore its damaged services and lost revenue due to customer refunds.

- In connection with an elaborate ransomware scheme, a federal grand jury in the District of Nevada indicted a Russian national for conspiracy to bribe an employee to introduce malicious software into the company's computer network, extract data from the network and then extort ransom money from the company by threatening to make the extracted data public. The target company was Tesla, and the goal was to profit from its stolen data.

- A General Electric Company employee with aspirations to steal company trade secrets and use the knowledge to launch a competing business pled guilty to multiple charges. According to Federal Bureau of Investigation (FBI) reports, the employee downloaded thousands of files from the company's system, including ones that contained trade secrets. The access to these files, however, wasn't discovered until after the company began researching a new and unknown competitor that bid on the same Saudi Arabia-based project months later. This research led to the discovery that the competing company was started by its own employee.

What are the warning signs that could indicate an insider threat?

To build awareness and improve detection of insider threats, the following common signs could indicate the presence of inappropriate insider activity in an organization:

- disgruntled employee behavior, such as displays of anger, exhibiting a negative attitude or talking about quitting;

- evidence of a user trying to circumvent access controls;

- dismantling, turning off or neglecting security controls, such as encryption or maintenance patches;

- frequently working late or in the office during off-hours when few others are present;

- violation of other corporate policies that may not be related to computer use;

- accessing or downloading large amounts of data;

- accessing -- or attempting to access -- data or applications that are not associated with an individual's role or responsibilities;

- connecting outside technology or personal devices to organizational systems or attempting to transmit data outside the organization; and

- searching and scanning for security vulnerabilities.
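
Several of the technical signs above (off-hours activity, large downloads and access outside a user's role) can be checked mechanically against access logs. The following is a minimal sketch in Python, not a production detector. The `AccessEvent` record, the role-to-resource map, the working hours and the 500 MB threshold are all hypothetical values chosen for illustration; a real deployment would take them from its own logs and policies.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AccessEvent:
    """One hypothetical access-log record."""
    user: str
    role: str
    resource: str
    bytes_transferred: int
    timestamp: datetime


# Assumed policy values, for illustration only.
ROLE_RESOURCES = {
    "engineer": {"source-repo", "build-server"},
    "sales": {"crm"},
}
WORK_START_HOUR = 7    # 07:00 local time
WORK_END_HOUR = 19     # 19:00 local time
LARGE_TRANSFER_BYTES = 500 * 1024 * 1024  # 500 MB


def warning_signs(event: AccessEvent) -> list[str]:
    """Return the warning signs that a single event matches."""
    signs = []
    if not (WORK_START_HOUR <= event.timestamp.hour < WORK_END_HOUR):
        signs.append("off-hours activity")
    if event.bytes_transferred >= LARGE_TRANSFER_BYTES:
        signs.append("large data transfer")
    if event.resource not in ROLE_RESOURCES.get(event.role, set()):
        signs.append("resource outside role")
    return signs


if __name__ == "__main__":
    event = AccessEvent(
        user="jdoe",
        role="sales",
        resource="source-repo",
        bytes_transferred=2 * 1024**3,
        timestamp=datetime(2024, 3, 2, 23, 15),
    )
    print(warning_signs(event))
```

A match on any single rule is a prompt for review rather than proof of wrongdoing; many employees legitimately work late or move large files.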

How can you defend against insider threats?

The Ponemon/Proofpoint report identified areas where modern organizations may be exposing themselves to insider threat incidents:

- Authorized users -- both employees and contractors -- aren't properly trained or aware of specific laws or regulatory requirements related to data that they work with.

- Poor identity management and access control protocols create another potential vulnerability. An abundance of privileged accounts is an ideal target for brute-force attacks and for social engineering, such as phishing emails.

To fill these gaps, there are two main paths forward:

- Awareness and training program implementation. Employees should be properly trained on potential security risks so that they understand how to use the organization's systems safely and securely. Security teams should specifically be trained on insider threat detection. Doing so can help them to better spot suspicious activity and prevent data loss or damage from insider attacks before they occur.

- Detection and prevention security measures. In addition to improving employee training and awareness, many organizations have begun implementing insider threat programs that mitigate insider threats through both detection and prevention. This can be accomplished through compliance, security best practices and continuous monitoring.

Many cybersecurity tools can scan and monitor systems to discover threats such as spyware, viruses and malware, as well as provide user behavior analytics.

Security controls can also be implemented to protect your data sources. Examples include encryption for data at rest, routine backups, scheduled maintenance and enforced two-factor authentication to strengthen password-based logins.

In addition, identity management tools often automate user access revocation when an employee is terminated. These tools also provide greater control over what your employees have access to so access to sensitive data sources can be limited.
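
The revocation check that identity management tools automate can be illustrated with a short sketch. This is an assumption-laden example rather than any specific product's behavior: the `Account` record, the employee IDs and the `accounts_to_revoke` helper are hypothetical. It flags active accounts owned by terminated employees, plus active accounts that map to no known employee, which is one way a fake account created before termination might surface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    """Hypothetical directory entry."""
    username: str
    owner_id: str   # ID of the employee the account is registered to
    active: bool


def accounts_to_revoke(accounts, terminated_ids, known_ids):
    """Return active accounts that should be disabled.

    An account is flagged when its owner has been terminated or
    when its owner ID does not match any known employee (orphaned).
    """
    flagged = []
    for acct in accounts:
        if not acct.active:
            continue  # already disabled
        if acct.owner_id in terminated_ids or acct.owner_id not in known_ids:
            flagged.append(acct)
    return flagged


if __name__ == "__main__":
    directory = [
        Account("adavis", "e1", True),
        Account("bchen", "e2", True),      # owner terminated
        Account("svc-ship", "e9", True),   # no matching employee
        Account("bchen-old", "e2", False),
    ]
    flagged = accounts_to_revoke(
        directory, terminated_ids={"e2"}, known_ids={"e1", "e2"}
    )
    print([a.username for a in flagged])
```

Running a check like this on a schedule, and not only at termination time, helps catch accounts that were created outside the normal provisioning process.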

Insider threat detection and prevention

Organizations can take the following steps to protect their data sources:

- Protect your sensitive data and systems. There are several methods that can help protect a company's data and critical systems. Investing in data loss prevention tools is one option, but also consider data classification, vendor management, and other risk management and security policies that can better prevent data breaches.

- Implement behavioral analytics and employee tracking. As mentioned above, insider threats can be difficult to uncover using manual processes alone. Sophisticated tracking tools, like user and entity behavior analytics, and network detection and response platforms use artificial intelligence and machine learning to detect even minimal changes in patterns that humans may not see.

- Reduce the attack surface through continuous monitoring. Continuous monitoring tools and attack surface management (ASM) help by constantly scanning computing systems and networks, taking an inventory of vulnerabilities, prioritizing them and sending user alerts when action is required.

- Patch vulnerabilities. As new vulnerabilities are found, either through continuous monitoring, ASM tools or vendor notifications, patch systems immediately. The holes in your defenses are the perfect opportunity for external threats to gain a foothold in your ecosystem.
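
To make the behavioral analytics step more concrete, the sketch below compares a user's activity today against that user's own history. It is a deliberately simple statistical stand-in (a z-score test) for the machine learning models that commercial user and entity behavior analytics tools use, and the function name, the threshold of 3 standard deviations and the sample figures are all assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history, today, threshold=3.0):
    """Flag today's value if it deviates strongly from the user's baseline.

    history:   past daily values for one user (e.g., MB downloaded per day)
    today:     the value observed today
    threshold: number of standard deviations treated as unusual (assumed)
    """
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly flat history: any change at all is a deviation.
        return today != baseline
    return abs(today - baseline) / spread > threshold


if __name__ == "__main__":
    daily_download_mb = [120, 95, 110, 130, 105]  # hypothetical baseline
    print(is_anomalous(daily_download_mb, 900))   # far above baseline
    print(is_anomalous(daily_download_mb, 125))   # within normal range
```

Baselining each user against their own history, rather than against a single company-wide limit, is what lets this kind of check notice a modest but unusual change for one person.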

For additional detail on preventing insider threats, read about 10 ways to prevent computer security threats from insiders.